Rethinking Infrastructure Boundaries for Graph Analytics

The Graph-Massivizer project reimagines how large-scale graph data is processed by leveraging the computing continuum, a seamless integration of edge, cloud, and high-performance computing (HPC) environments. Unlike traditional siloed infrastructures, the continuum enables cross-layer orchestration, where graph workloads dynamically shift between infrastructure tiers based on real-time latency, energy, and cost trade-offs. This shift is crucial for modern data-intensive applications, where graph-based workloads, ranging from social network analysis to anomaly detection and recommendation systems, demand high throughput, low latency, and adaptive execution environments.

Why Graph Workloads Need the Continuum

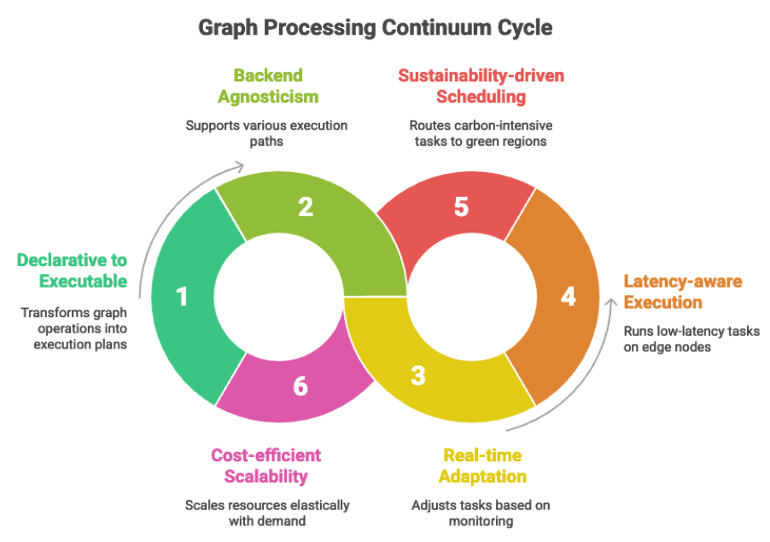

Graph analytics pose unique challenges: irregular computation patterns, data dependencies, and unpredictable workloads. A single computation can touch millions of nodes and edges, triggering cascading execution chains. The continuum provides the flexibility needed to manage this complexity:

- Latency-aware execution: Latency-sensitive components such as subgraph filtering or anomaly inference run directly on edge nodes (e.g., Jetson, Raspberry Pi), minimizing data transfer.

- Sustainability-driven scheduling: Energy-intensive operations, such as matrix multiplications or full-graph traversals, are routed to green cloud regions or HPC clusters with monitored energy efficiency.

- Cost-efficient scalability: By adopting serverless computing, resources scale elastically with demand, avoiding idle infrastructure and reducing operational overhead.

Graph-Choreographer: The Serverless Orchestrator of the Continuum

At the heart of this adaptive orchestration lies Graph-Choreographer, the execution backbone of the Graph-Massivizer toolkit. It maps graph workflows, expressed as basic graph operations (BGOs), onto serverless, Kubernetes-native deployments across heterogeneous nodes.

Key Features

- Declarative to Executable: Transforms high-level BGO workflows into executable DAGs using orchestration services like HEFTLess and EnergyLess.

- Backend Agnosticism: Dynamically selects between Argo Workflows and OpenFaaS, enabling stateful or stateless execution depending on runtime conditions.

- Real-time Adaptation: Monitors energy draw, CO2 intensity, and performance KPIs via Prometheus, Kepler, and PowerJoular, enabling runtime adaptations like reassigning tasks, throttling concurrency, or reprioritizing stages.
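The first and third features can be illustrated with a small sketch. It assumes a workflow declared as a dictionary of steps and their dependencies, groups the steps into stages that could run in parallel, and applies a simple rule that throttles concurrency when carbon intensity is high. The step names, the threshold, and both functions are hypothetical; this does not reproduce HEFTLess, EnergyLess, or the Graph-Choreographer API.

```python
from graphlib import TopologicalSorter

# Hypothetical declarative workflow: each step maps to the steps it depends on.
WORKFLOW = {
    "load_graph": [],
    "filter_subgraph": ["load_graph"],
    "pagerank": ["filter_subgraph"],
    "detect_anomalies": ["filter_subgraph"],
    "report": ["pagerank", "detect_anomalies"],
}


def to_stages(workflow):
    """Group steps into ordered stages; steps within a stage are independent."""
    sorter = TopologicalSorter(workflow)
    sorter.prepare()  # raises graphlib.CycleError if the workflow has a cycle
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable order
        stages.append(ready)
        sorter.done(*ready)
    return stages


def adapt_concurrency(current, carbon_g_per_kwh, threshold=300.0, floor=1):
    """Halve concurrency while carbon intensity exceeds an assumed threshold."""
    if carbon_g_per_kwh > threshold:
        return max(floor, current // 2)
    return current


if __name__ == "__main__":
    for index, stage in enumerate(to_stages(WORKFLOW)):
        print(index, stage)
    print(adapt_concurrency(8, carbon_g_per_kwh=450.0))
```

In this sketch `pagerank` and `detect_anomalies` land in the same stage because neither depends on the other. In a real deployment, the carbon-intensity reading would come from the monitoring stack rather than a function argument.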

Figure: Graph Processing Continuum Cycle

The Next Frontier

The computing continuum challenges us to rethink the boundaries of infrastructure, not as isolated layers, but as interconnected zones of opportunity. For graph processing, this means more than just faster analytics; it means smarter deployments, energy-aware execution, and systems that respond to both workload and world conditions. As data grows in scale and complexity, our tools must evolve to be not only scalable and sustainable but also intelligent.

Embracing the continuum is not just a technical decision; it is a strategic shift toward architectures that are adaptive by design and responsible by default. By uniting serverless computing, edge intelligence, and green HPC under a common orchestration model, projects like Graph-Massivizer pave the way for a new generation of data-driven systems that are both high-performing and environmentally conscious. The path forward is clear: if our data spans the continuum, so must our computation.

Author:

Dr. Reza Farahani

University of Klagenfurt, Austria